The numbers are staggering. But so are the emerging questions underneath them.

It has been a jaw-dropping few months for Anthropic. At the end of 2025, the company was running at roughly $9B in annualized revenue. By March 2026, that number had climbed to approximately $19B. As of this week, April 6, 2026, Anthropic has surpassed a $30B revenue run rate, a figure the company itself confirmed alongside a landmark compute deal with Google and Broadcom for multiple gigawatts of next-generation TPU capacity.

Let that sink in: Anthropic added roughly $11B in annualized revenue in just 34 days. Of all the software companies that have gone public in the last decade, not one has achieved this level of growth. The Information has outlined a scenario in which Anthropic hits $100B by the end of the year!

Claude is the engine behind most of this growth, and by most measures, it has become one of the most capable and widely adopted AI tools ever built. We sure love using it. But as we watch those numbers accelerate, another set of questions starts to emerge. Is this growth sustainable? What does it actually cost to run Claude, and who bears that cost now, and in the long term? As the open-source model ecosystem explodes around Anthropic, how durable is the era of “Claude for everything”?

Anthropic was founded several years after OpenAI and has raised a fraction of the capital, so its ascent to a $30B revenue run rate is a reminder that product focus and a differentiated perspective can outrun a head start. Not only has the company raised less, it has also burned significantly less. The AI race moves fast enough to make last year look like ancient history. OpenAI was the darling not long ago, but today the landscape looks meaningfully different and is still shifting.

So what does this all mean for pricing, and what can we expect for the broader ecosystem that is using all of this Claude?

How Claude Actually Charges Today

Before we can understand the growth story, we need to understand the pricing architecture underneath it. Claude is available across four main tiers:

- Free: Web, iOS, Android, and desktop access. Limited daily usage. No Claude Code.

- Pro ($20/month): 5x the Free capacity, priority access, and Claude Code bundled in. The sweet spot for individual professionals.

- Max ($100–$200/month): Comes in two variants, 5x Pro ($100/month) and 20x Pro ($200/month). Persistent memory, early feature access, and the highest priority access during peak times. Built for power users running Claude nearly continuously.

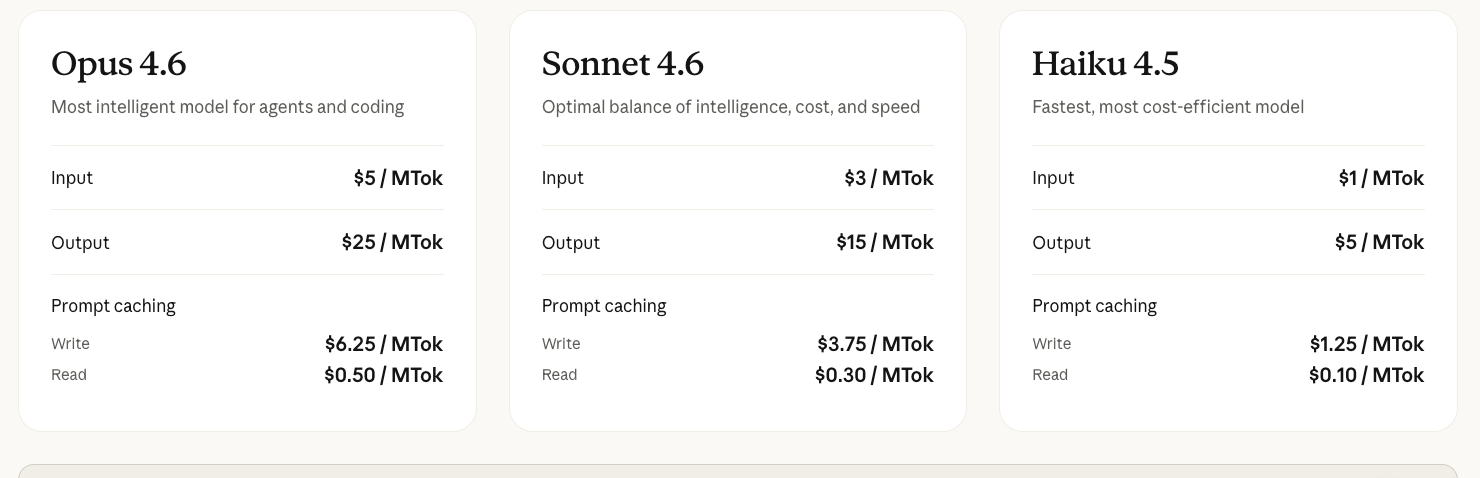

- Developer API (pay-per-token): The pricing that powers the enterprise numbers. As of April 2026:

- Claude Haiku 4.5: $1 input / $5 output per million tokens

- Claude Sonnet 4.6: $3 input / $15 output per million tokens

- Claude Opus 4.6: $5 input / $25 output per million tokens
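To make the per-token rates concrete, here is a minimal sketch that turns the list prices above into a dollar cost for a single request. The `PRICES_PER_MTOK` table and the `request_cost` helper are illustrative names, not part of any Anthropic SDK, and the calculation ignores caching and any volume or batch discounts.

```python
# Illustrative only: the list prices quoted above, in USD per million tokens.
PRICES_PER_MTOK = {
    "haiku-4.5": (1.00, 5.00),    # (input, output)
    "sonnet-4.6": (3.00, 15.00),
    "opus-4.6": (5.00, 25.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at list price (no caching, no discounts)."""
    input_price, output_price = PRICES_PER_MTOK[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


if __name__ == "__main__":
    # A hypothetical agentic call: 50K tokens of context in, 2K tokens out.
    for model in PRICES_PER_MTOK:
        print(f"{model}: ${request_cost(model, 50_000, 2_000):.4f}")
```

For that hypothetical call, the same work costs five times more on Opus than on Haiku, which is why the choice of model per step matters once every call is metered.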

The distinction that matters most for understanding Anthropic’s growth is the one between consumer and enterprise. Individual subscribers pay a flat monthly fee with pooled usage. Enterprise customers (on Team or custom Enterprise plans) pay per token on top of a seat fee, with no included usage cushion. Every call is metered. That means the 1,000+ enterprise customers each spending $1M+ annually are almost entirely driving token-based revenue, not seat-based revenue. It is a fundamentally different and potentially more durable economic model than traditional SaaS, and it is what makes the $30B run rate feel structural rather than inflated by consumer subscriptions.

The economics of Max are remarkable when you run the numbers. One developer tracked 10 billion tokens over eight months: the equivalent of $15,000+ at API rates, for roughly $800 on the Max plan. Even at $200/month with an annual commitment, that is $2,400 per year for what, until recently, looked like effectively unlimited agentic compute.

Compare that to a junior software engineer at $100K+ in base salary, plus benefits and management overhead, and you start to understand why the enterprise demand signal has been so explosive. For a brief window, you could run Claude Max as a 24/7 autonomous software developer for roughly 2–3% of what a human would cost. That math did not last, and there is not enough compute to offer that deal to everybody.
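The arithmetic behind those comparisons is simple enough to check directly. The sketch below uses only the figures quoted above, treating the "$15,000+" and "$100K+" numbers as floors; the variable names are ours.

```python
# Figures quoted in the text above; nothing here is measured independently.
API_EQUIVALENT_USD = 15_000   # "$15,000+" at API rates, treated as a floor
MAX_SPEND_USD = 800           # roughly what the developer paid on Max
TOKENS = 10_000_000_000       # 10 billion tokens over eight months

MAX_ANNUAL_USD = 200 * 12     # top Max tier for a full year
ENGINEER_BASE_USD = 100_000   # base salary only, before benefits and overhead

# How many times cheaper the subscription was than metered API usage.
discount_multiple = API_EQUIVALENT_USD / MAX_SPEND_USD

# Implied blended API price, in USD per million tokens.
blended_api_rate = API_EQUIVALENT_USD / (TOKENS / 1_000_000)

# Annual Max cost as a share of the engineer's base salary.
cost_share = MAX_ANNUAL_USD / ENGINEER_BASE_USD

if __name__ == "__main__":
    print(f"Subscription discount: {discount_multiple:.2f}x")
    print(f"Implied blended rate: ${blended_api_rate:.2f} per million tokens")
    print(f"Max as share of base salary: {cost_share:.1%}")
```

On these inputs the subscription came in at under one-eighteenth of the API-equivalent cost, and a year of the top Max tier works out to 2.4% of the base salary alone.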

The End of the All-You-Can-Eat Era

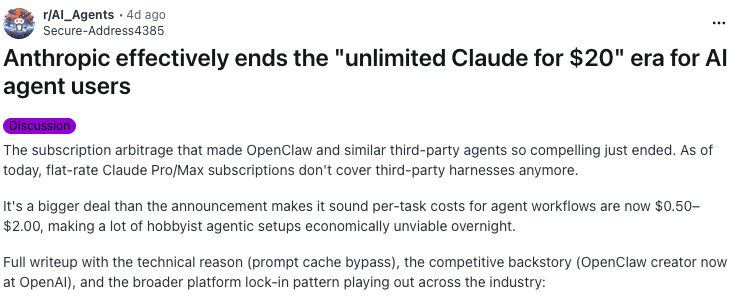

On April 4, 2026, Anthropic made a quiet but consequential change. Claude Pro and Max subscribers could no longer route their subscription usage through third-party agent frameworks like OpenClaw, an open-source platform with an estimated 135K active instances at the time of the announcement. The company said the policy would extend to all third-party harnesses in the coming weeks.

The logic was straightforward: Anthropic’s own tools — Claude Code, Claude Cowork — are built to maximize prompt cache hit rates, reusing previously processed context to dramatically reduce compute overhead. Third-party harnesses largely bypass this optimization layer. A single heavy OpenClaw session could burn as much infrastructure as many standard Claude Code sessions at equivalent output volume.
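A rough way to see why cache hit rates matter is to model the effective price of input tokens as a blend of cached and uncached reads. This is a sketch under stated assumptions: it uses price as a stand-in for compute, it ignores any surcharge for writing to the cache, and the `cached_fraction` default of 0.1 is a placeholder to replace with the figure from the current pricing page. The hit rates in the example are invented.

```python
def effective_input_price(
    base_price: float, hit_rate: float, cached_fraction: float = 0.1
) -> float:
    """Blend cached and uncached input prices (USD per million tokens).

    hit_rate is the share of input tokens served from the prompt cache;
    cached_fraction is the assumed price of a cached token relative to base.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return base_price * (hit_rate * cached_fraction + (1.0 - hit_rate))


if __name__ == "__main__":
    # Hypothetical hit rates for a cache-aware tool and a harness that misses often.
    for label, rate in [("cache-aware tool", 0.9), ("cache-unaware harness", 0.2)]:
        print(f"{label}: ${effective_input_price(5.00, rate):.2f} per million input tokens")
```

Under these made-up hit rates, the same input volume is more than four times as expensive to serve for the cache-unaware harness.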

Analysts estimated that the gap between what heavy agentic users were paying under flat subscriptions and what equivalent usage would cost at API rates was more than 5x. Anthropic was quietly cross-subsidizing a class of usage it had never intended to price for. For users who want to keep running Claude through third-party tools, the paths forward are direct API billing at $3 input / $15 output per million tokens (Sonnet) or $5 input / $25 output per million tokens (Opus), or a new “extra usage” pay-as-you-go layer billed on top of their subscription. Tasks through these channels are running roughly $0.50–$1.00 each at serious usage volumes. The flat-rate arbitrage is over.
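To put the per-task figure in perspective, this small sketch asks how many metered tasks a month of the old flat fee would now buy. It uses only the $0.50–$1.00 per-task range and the $200 Max price quoted above; `breakeven_tasks` is a helper name of our own.

```python
def breakeven_tasks(monthly_fee: float, cost_per_task: float) -> int:
    """Whole tasks per month that the flat fee would buy at the metered price."""
    return int(monthly_fee // cost_per_task)


if __name__ == "__main__":
    for cost in (0.50, 1.00):
        tasks = breakeven_tasks(200, cost)
        print(f"At ${cost:.2f} per task, $200 buys {tasks} tasks per month")
```

That is 200 to 400 tasks per month, a volume a continuously running agent could plausibly exhaust in days rather than weeks.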

This matters for a reason that goes beyond OpenClaw. It signals something structural about where Anthropic is heading: the company wants to own the customer relationship, the compute economics, and the distribution layer. The $100M committed to the Claude Partner Network in March, the launch of a controlled marketplace for Claude-powered apps, the blocking of third-party harnesses — these are not isolated decisions. They are the architecture of a platform company consolidating around its moat.

That platform ambition was reinforced just this week with the launch of Claude Managed Agents, a cloud service that handles the scaffolding (containers, state management, tool orchestration) developers previously had to build themselves, billed at $0.08 per agent runtime hour on top of model usage. It’s a direct play to own the agentic development layer, not just the model underneath it.

The Open-Source Wildcard

At the same time Anthropic is tightening its pricing grip, the open-source model ecosystem is becoming more capable than ever.

On April 2, 2026, Google DeepMind released Gemma 4 — a family of open-weight models ranging from 2B to 31B parameters under a fully permissive Apache 2.0 license. The 26B Mixture of Experts (MoE) variant activates only 3.8B parameters during inference, delivering near-frontier reasoning at a fraction of the latency and cost, while the dense models offer predictability for workloads that need it. Both feature 256K context windows, native function calling, agentic workflow support, and multimodal inputs — running on a single 80GB H100 GPU.

What makes Gemma 4 particularly relevant right now is the timing. As Anthropic tightens access for third-party harnesses like OpenClaw, a meaningful wave of those users is turning to Gemma 4 as a local alternative and finding it surprisingly capable. The architecture was built for exactly this moment: intelligence per parameter, not intelligence per dollar of cloud spend. Sparse activation, efficient attention, and per-layer embedding tricks mean Gemma 4 can extract frontier-level reasoning from the hardware you already own.

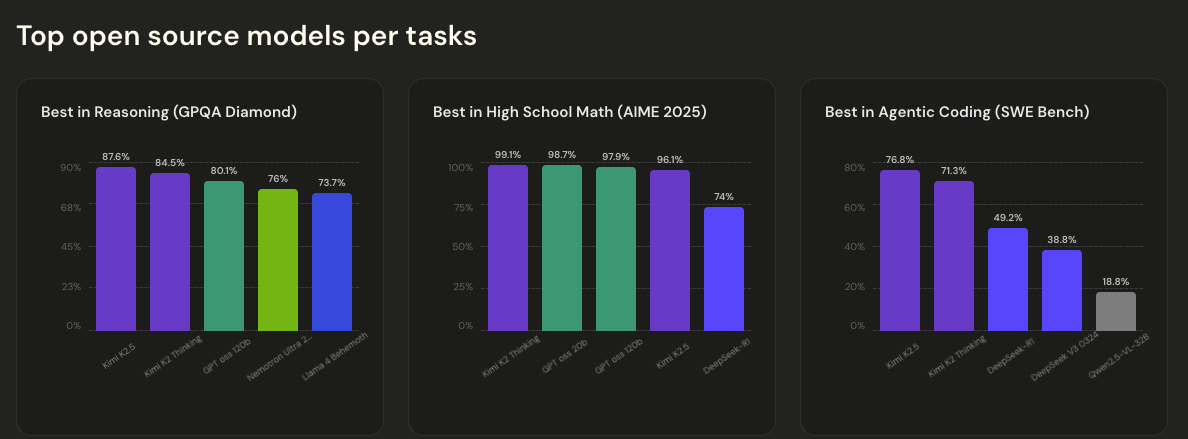

And Gemma may not be the sharpest edge of the open-source wave. The top spots on the open model leaderboard are dominated by Chinese models: Qwen 3.5 from Alibaba, GLM-5 from Zhipu AI, Kimi K2.5 from Moonshot AI. A 2026 Databricks survey found that more than three-quarters of enterprises are already running a mix of closed and open-source models.

The question is no longer whether Claude is the best model. It often still is for the most complex tasks. The question is whether you can afford to route everything through it.

Constraints as Architecture

This is where the pricing shift starts to look less like a tax and more like a forcing function — and paradoxically, a productive one.

When Claude Max was $200/month with no effective ceiling, developers built agents that were sloppy with compute. Why not? Spinning up a retry loop, letting agents chat freely back and forth, re-querying context you already had — none of it cost you anything you could measure. The subscription was a fixed overhead, and the marginal cost of another call was zero.

When every API call costs real money, that changes. You cannot afford agents that spin in retry loops or make redundant calls. You cannot afford multi-agent setups where the agents spend more time coordinating with each other than doing actual work. You have to think. The architectures that survive this environment share a common set of properties:

- Smart task decomposition. Break work into minimal subtasks so each model call does the least expensive thing possible. Not every step needs Opus.

- Caching and memoization. Don’t ask the model the same question twice. Return the stored result instead of re-querying.

- Hierarchical delegation. Use a cheap, fast model (Haiku, or an open-source alternative) for routing, triage, and simple lookups. Reserve Opus or Sonnet for the tasks that genuinely require frontier reasoning.

- Early termination. Detect when a task is stuck and kill it before it burns through credits. The worst agentic systems are the ones that fail expensively.
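Three of the properties above (caching, hierarchical delegation, and early termination) can be sketched together in a few dozen lines. Everything here is hypothetical: `FrugalAgent`, the "cheap" and "frontier" tier names, the crude triage rule, and the `fake_model` stub with its made-up costs stand in for a real client and a real classifier. Task decomposition is left out because it depends heavily on the workload.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple

# Hypothetical client signature: (model_tier, prompt) -> (answer, cost_in_usd).
ModelCall = Callable[[str, str], Tuple[str, float]]


class BudgetExceeded(RuntimeError):
    """Raised when a task has already used up its allotted budget."""


@dataclass
class FrugalAgent:
    call_model: ModelCall
    budget_usd: float
    spent_usd: float = 0.0
    cache: Dict[str, str] = field(default_factory=dict)

    def pick_model(self, prompt: str) -> str:
        # Hierarchical delegation: a deliberately crude triage rule (an assumption).
        # A real system would likely use a cheap classifier model here.
        hard = len(prompt) > 2_000 or "design" in prompt.lower()
        return "frontier" if hard else "cheap"

    def ask(self, prompt: str) -> str:
        # Caching and memoization: identical prompts return the stored answer.
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self.cache:
            return self.cache[key]
        # Early termination: refuse further calls once the budget is used up.
        if self.spent_usd >= self.budget_usd:
            raise BudgetExceeded(
                f"spent ${self.spent_usd:.2f} of ${self.budget_usd:.2f}"
            )
        text, cost = self.call_model(self.pick_model(prompt), prompt)
        self.spent_usd += cost
        self.cache[key] = text
        return text


def fake_model(model: str, prompt: str) -> Tuple[str, float]:
    """Offline stub so the sketch runs without network access; costs are made up."""
    return f"[{model}] answer", (0.30 if model == "frontier" else 0.01)


if __name__ == "__main__":
    agent = FrugalAgent(call_model=fake_model, budget_usd=0.50)
    print(agent.ask("Look up the ticket status"))   # routed to the cheap tier
    print(agent.ask("Look up the ticket status"))   # served from cache, no spend
    print(agent.ask("Design the migration plan"))   # routed to the frontier tier
    print(f"Total spend: ${agent.spent_usd:.2f}")
```

The budget check runs before the call rather than after it, so a stuck task stops at the ceiling instead of discovering the overrun on the next invoice.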

Ironically, this might produce better agent systems. The constraint forces you to think about efficiency and coordination in ways that “unlimited” never did. The best multi-agent systems are the ones that minimize inter-agent communication, not the ones that let agents chat freely.

What This Means for Startups

There is a significant opportunity hiding inside this transition. For the last year, the venture-backed AI application layer has been built largely on a simple assumption: foundation model costs will stay low enough, or flat-rate enough, that you can build on top of them without worrying too much about the economics.

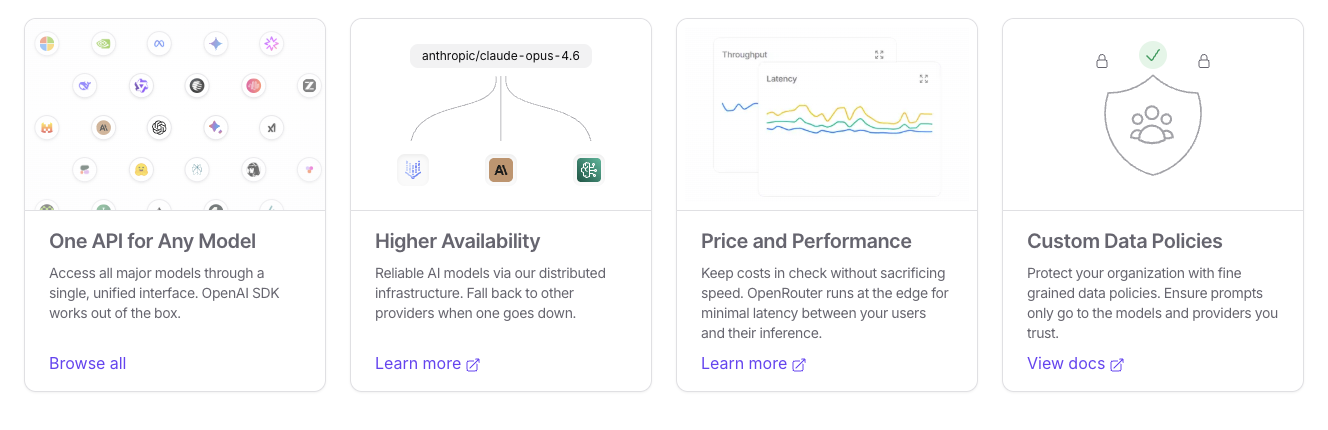

But as Claude’s API pricing becomes real money at scale, and as Anthropic draws a harder line between its own products and the third-party ecosystem, a new design space opens up. Companies that build intelligent routing layers (systems that can dynamically decide which model to use for which task, optimizing cost against quality in real time) will have a structural advantage. So will companies that deeply understand how both open-source and closed-source models operate. Infrastructure for hybrid architectures that blend frontier APIs with self-hosted open models will become increasingly valuable. The “right model for the right task” is not just a technical principle; it is a margin story.
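At its simplest, a routing layer of this kind picks the cheapest model that clears a task's quality bar. The sketch below is one hedged illustration of that idea, not a description of any existing product: the model names, per-task costs, and quality scores are invented placeholders that a real system would replace with its own evaluation data.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_task_usd: float  # assumed average cost, invented for illustration
    quality: float            # hypothetical 0-1 score from your own evals


def route(options: List[ModelOption], min_quality: float) -> ModelOption:
    """Pick the cheapest option that meets the task's quality bar."""
    eligible = [o for o in options if o.quality >= min_quality]
    if not eligible:
        # Nothing clears the bar: fall back to the highest-quality option.
        return max(options, key=lambda o: o.quality)
    return min(eligible, key=lambda o: o.cost_per_task_usd)


OPTIONS = [
    ModelOption("self-hosted-open-model", 0.02, 0.70),
    ModelOption("small-hosted-model", 0.06, 0.80),
    ModelOption("frontier-model", 0.30, 0.95),
]

if __name__ == "__main__":
    for bar in (0.50, 0.75, 0.90):
        print(f"quality >= {bar:.2f}: {route(OPTIONS, bar).name}")
```

The margin story lives in the quality scores: the better a company can measure which tasks truly need the frontier model, the more traffic it can safely send to cheaper options.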

The winners in the next phase of AI application development will not be the ones who picked the best single model. They will be the ones who built the most efficient orchestration layer around a heterogeneous mix and priced their own products accordingly.

In Conclusion…

Let us come back to the headline number. $30B in annualized revenue. Growing from $9B at the end of 2025, barely three months ago! With many of our startups, we’re talking in MILLIONS, and that’s IF they are successfully growing.

This sort of growth is real and extremely impressive. The enterprise signal (1,000+ customers spending $1M+ annually) is not hype. It reflects genuine adoption of Claude as infrastructure inside serious organizations. The compute deal with Google and Broadcom for multiple gigawatts of next-generation TPUs, coming online in 2027, suggests that Anthropic expects this demand to continue accelerating.

But the pricing changes of the last few weeks also tell a different story about the margin structure underneath those numbers and the available compute. The flat-rate subscription that powered the “Claude as a $2,400/year software engineer” narrative is tightening. The open-source ecosystem is closing the capability gap. And the enterprise customers who are really spending are doing so through the API, where every token is metered.

This does not make Anthropic’s growth story false, but it makes it more interesting as we think about what’s to come. The question for the next twelve months is not whether Claude is good. It clearly is. The question is whether the moat is deep enough, and the pricing architecture disciplined enough, to sustain that $30B run rate as capable alternatives proliferate and the cost of intelligence continues to fall!

Buckle up :).