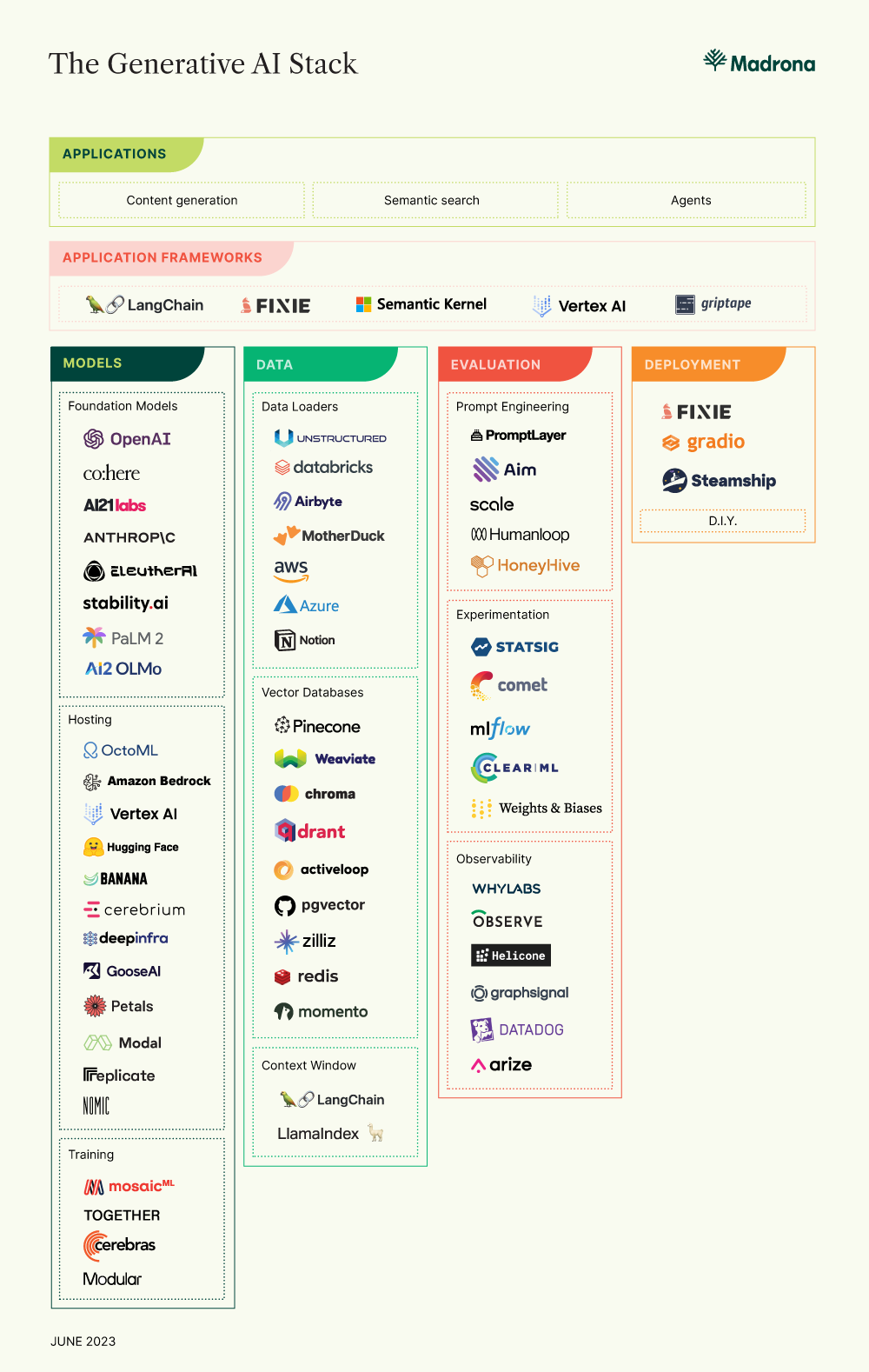

There is just so much happening in AI right now! Applications that used to take years to develop are now being built over weekend hackathons. It’s all a testament to the power of foundation models (we have not found the outer limits yet) and rapid innovation at the infrastructure layer to put that power in the hands of more developers. While this progress opens up exciting possibilities, it also poses challenges to developers who may find themselves overwhelmed by the myriad options available. Fortunately, as the ecosystem matures, we are beginning to see components of a new generative AI stack developing.

Application Frameworks

Application frameworks have emerged to quickly absorb the storm of new innovations and rationalize them into a coherent programming model. They simplify the development process and allow developers to iterate quickly.

Several frameworks have emerged, each building its own interchangeable and complementary ecosystem of tools. LangChain has become the developer community’s open-source focal point for building with foundation models. Fixie is building an enterprise-grade platform for creating, deploying, and managing AI agents. Cloud providers are also building application frameworks, such as Microsoft’s Semantic Kernel and Google Cloud’s Vertex AI platform.

Developers are using these frameworks to create applications that generate new content, create semantic systems that allow users to search content using natural language, and agents that perform tasks autonomously. These applications are already fundamentally changing the way we create, the way we synthesize information, and the way we work.

The tooling ecosystem makes it possible for application developers to more easily bring their visions to life by leveraging the domain expertise and understanding of their customers without necessarily needing the technical depth required at the infrastructure level. Today’s ecosystem can be broken into four parts: Models, Data, Evaluation Platform, and Deployment.

Models

Let’s start with the foundation model (FM) itself. FMs are capable of human-like reasoning. They are the “brain” behind it all. Developers have several FMs to choose from, varying in output quality, modalities, context window size, cost, and latency. The most optimal design often requires developers to use a combination of multiple FMs in their application.

Developers can select proprietary FMs created by vendors like Open AI, Anthropic, or Cohere or host one of a growing number of open-source FMs. Developers can also choose to train their own model.

- Foundation Models: Developers can select models built by providers.

- Hosting: Developers looking to use an open-source model (e.g., Stable Diffusion, GPT-J, FLAN T-5, Llama) can select one of the following hosting services. New advancements made by companies like OctoML allow developers to not only host models on the server but also deploy them on edge devices and even the browser. This not only improves privacy and security but also reduces latency and cost.

- Training: Developers can train their own language model using a variety of emerging platforms. Several of these teams have built open-source models developers can leverage out of the box.

Data

LLMs are a powerful technology. But, they are limited to reasoning about the facts on which they were trained. That’s constraining for developers looking to make decisions on the data that matters to them. Fortunately, there are mechanisms developers can use to connect and operationalize their data:

- Data Loaders: Developers can bring in data from various sources. This includes data loaders from structured data sources like databases and unstructured data sources. Enterprise customers are building personalized content generation and semantic search applications with unstructured data stored in PDFs, documents, powerpoints, etc., using sophisticated ETL pipelines built by Unstructured.io.

- Vector Databases: When building LLM applications — especially semantic search systems and conversational interfaces — developers often want to vectorize a variety of unstructured data using LLM embeddings and store those vectors so they can be queried effectively. Enter vector databases. Vector stores vary in terms of closed vs. open source, supported modalities, performance, and developer experience. There are several stand-alone vector database offerings and others built by existing database systems.

- Context Window: Retrieval augmented generation is a popular technique for personalizing model outputs by incorporating data directly in the prompt. Developers can achieve personalization without modifying the model weights through fine-tuning. Projects like LangChain and LlamaIndex provide data structures for incorporating data into the model’s context window.

Evaluation Platform

LLM developers face a tradeoff between model performance, inference cost, and latency. Developers can improve performance across all three vectors by iterating on prompts, fine-tuning the model, or switching between model providers. However, measuring performance is more complex due to the probabilistic nature of LLMs and the non-determinism of tasks.

Fortunately, there are several evaluation tools to help developers determine the best prompts, provide offline and online experimentation tracking, and monitor model performance in production:

- Prompt Engineering: There is a variety of No Code / Low Code tooling that helps developers iterate on prompts and see a variety of outputs across models. Prompt engineers can leverage these platforms to dial in on the best prompts for their application experience.

- Experimentation: ML engineers looking to experiment with prompts, hyperparameters, fine-tuning, and the models themselves can track their experiments using a number of tools. Experimental models can be evaluated offline in a staging environment using benchmark data sets, human labelers, or even LLMs. However, offline methodologies can only take you so far. Developers can use tools like Statsig to evaluate model performance in production. Data-driven experimentation and faster iteration cycles are critical to building defensibility.

- Observability: After deploying an application in production, it is necessary to track the model’s performance, cost, latency, and behavior over time. These platforms can be used to guide future prompt iteration and model experimentation. WhyLabs recently launched LangKit, which gives developers visibility into the quality of model outputs, protection against malicious usage patterns, and responsible AI checks.

Deployment

Finally, developers want to deploy their applications into production.

Developers can self-host LLM applications and deploy them using popular frameworks like Gradio. Or, developers can use third-party services to deploy applications.

Fixie can be used to build, share, and deploy Agents in production.

The Future is still being built

Last month we hosted an AI meetup at the OctoML headquarters in Seattle, and like the meetups in other cities, we were overwhelmed by the number of attendees and the quality of their demos. We are astonished at how quickly the science and tooling ecosystems are developing and are excited by the new possibilities that will be unlocked.

Some areas that we’re particularly excited about are no-code interfaces that bring the power of foundation models to more builders, the latest advancements in security for LLMs, better mechanisms to control and monitor the quality of model outputs, and new ways to distill models to make them cheaper to run in production.

For developers building in or learning more about the rapidly evolving ecosystem, please reach out at [email protected].